Organizations that build competitive advantage through their people do not spend more on development than other organizations.

They spend it on the right behaviors, measured from the outside by the people who see those behaviors every day.

How do we know whom to develop, and in what? And once we have developed them, how do we know it worked?

Often you design a program carefully. You measure satisfaction, test knowledge and do everything right, and yet, most of the time, nothing changes. This is the most common story in leadership development. The problem is not the training or the instructor; it starts earlier than that.

The organizations that build leadership capability most effectively and sustainably do not run more training or learning programs than anyone else. Instead, they create an environment where leaders can practice, and they confirm progress through better feedback. As a result, leaders see themselves clearly, and their development makes a meaningful difference to how they perform.

Ask yourself five questions that many organizations have never seriously asked. Each one is harder than the one before.

Question 1: What is the organization's key strategy, and what are the capability gaps among its people, at both the leadership and employee levels? This question matters most when planning any program. HR needs to do the homework together with senior leadership to analyze those gaps and agree on the strategy. Many organizations already have mechanisms or processes that support this, for example:

- Strategic Workforce Planning connected to the Business Plan
- Talent Management
- Succession Planning
- An Organization Development Plan
- People Risks & Rewards

If these are not in place, HR should set up a recurring exercise, at least once or twice a year, to assess and plan organizational development together with leadership.

Aligning every activity with business goals lets us prove the effectiveness of the development programs we implement. Some areas are soft skills, or dimensions that business figures do not capture directly. Even there, we can still set indirect indicators such as engagement level or turnover rate. Just as important, this alignment earns early buy-in and support from leadership. It also shows clearly the value of people development work.

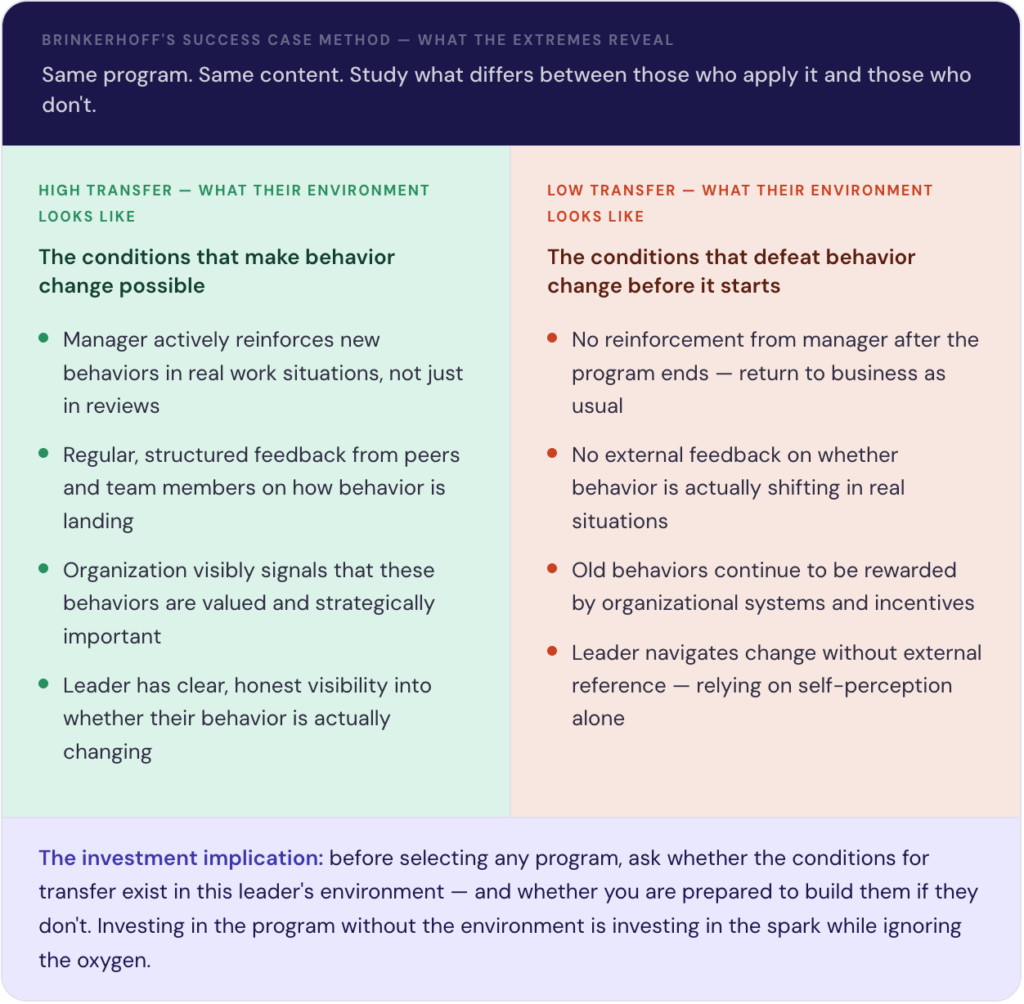

Question 2: Are you designing a “program”, or designing an “environment”? Brinkerhoff's research is clear. The difference between leaders who genuinely change and those who don't rarely comes from the curriculum itself; it comes from what surrounds them at work.

Participants in training or coaching often perform well in the training room or in front of the coach. That does not mean they will apply what they learned, or apply it well, once they are back in their real work. What matters, then, is building an environment where new skills are practiced until they become habits, through:

- reinforcement from managers
- feedback from colleagues
- communication from the organization signaling why the new behavior matters

A training or coaching program is the spark, but the environment is the oxygen. You need both, yet most organizations invest in the spark and forget about the oxygen entirely.
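Brinkerhoff's Success Case Method turns the comparison between leaders who change and leaders who don't into a procedure. Rather than averaging everyone, it studies the participants who applied the learning most and least.

Below is a minimal Python sketch of that selection step. It assumes each participant already has a transfer score, for example a hypothetical 1-5 rating from observers. The function name `success_case_extremes` and the sample data are illustrative assumptions. Brinkerhoff's method itself goes on to interview the selected cases to find out what made the difference.

```python
from statistics import mean


def success_case_extremes(transfer_scores: dict[str, float], k: int = 2) -> tuple[list[str], list[str]]:
    """Return the k highest- and k lowest-transfer participants.

    transfer_scores maps a participant to a (hypothetical) transfer rating.
    """
    if 2 * k > len(transfer_scores):
        raise ValueError("need at least 2*k participants so the two groups don't overlap")
    # Rank participants from highest to lowest transfer score.
    ranked = sorted(transfer_scores.items(), key=lambda item: item[1], reverse=True)
    high = [name for name, _ in ranked[:k]]
    # Take the bottom k and list them lowest first.
    low = [name for name, _ in reversed(ranked[-k:])]
    return high, low


# Hypothetical observer-based transfer ratings on a 1-5 scale.
scores = {"A": 4.6, "B": 1.4, "C": 3.1, "D": 2.9, "E": 4.2, "F": 1.8}

print(round(mean(scores.values()), 2))  # 3.0: the average alone hides both extremes
high, low = success_case_extremes(scores)
print(high, low)  # ['A', 'E'] ['B', 'F']
```

An average of 3.0 looks unremarkable. The two ends of the ranking are where the useful questions about each leader's environment begin.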

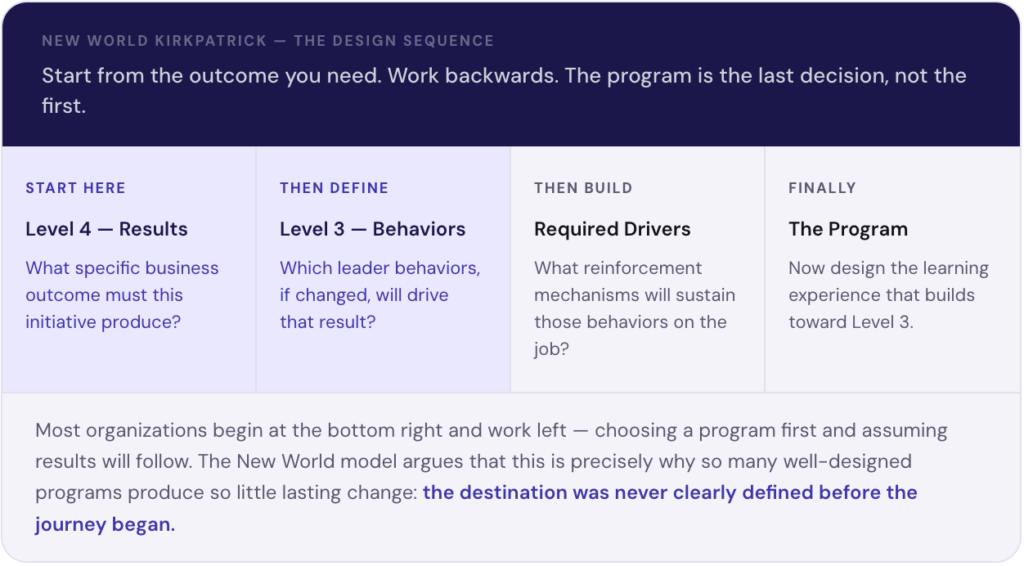

Question 3: Are you designing forward, or designing backward? The New World Kirkpatrick Model demands something most L&D planning processes still cannot do. It works backward in four steps:

1. Start from Level 4 (business results).
2. Define Level 3 (the behaviors that will produce those results).
3. Build the required drivers that sustain those behaviors.
4. Only then design the program.

Many organizations do the opposite: they pick a course first and hope the behavior will follow. It usually doesn't, because they never defined the destination before setting off. Compare it with a GPS: only once you have entered the destination can it choose the best route before you leave.

Question 4: If behavior changes, who will know? Who is actually observing whether behavior has really changed? This is the question every framework most needs answered. Four framework elements depend on the same mechanism:

- the 20% in 70-20-10
- the required drivers in New World Kirkpatrick
- external confirmation in LTEM
- the feedback environment in Brinkerhoff

That mechanism is something that gathers honest perspectives from the team, peers and the manager, before and after development.

Without this mechanism, Level 3 stays invisible and ROI is only an estimate. Required drivers have no feedback loop, and the environment that lets change survive never fully takes shape.

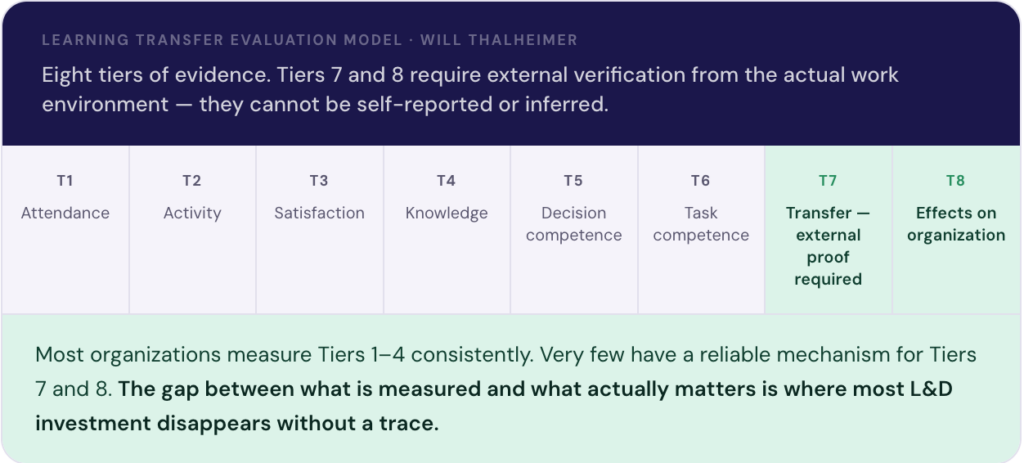

Kirkpatrick Level 3 and the Learning Transfer Evaluation Model (LTEM) both require something many organizations quietly skip: external confirmation of behavior. That means not a satisfaction survey, not a test and not a self-assessment. It means observation by the people who work with the leader every day.

Levels 1 and 2 can be measured in a room you control. Level 3 happens afterward, and you are not there. It happens in real meetings, under real work pressure, with the team the leader actually works with.

We have referred to five frameworks in this piece. Here is a simple summary of each.

| Framework [focus] | Key question | What it tells us |
|---|---|---|
| 70-20-10 Model [Design] | “Are we investing our development budget in the right things?” | The 70-20-10 Model says real growth comes from: ◾ 70%: on-the-job experience ◾ 20%: learning from others, such as feedback, coaching and observation ◾ 10%: classroom training. Yet most organizations' budgets go to that 10%. The question is not just “How much are we spending?” but “Are we spending it on the right things?” Use it as a framework for deciding where to invest. |
| Phillips ROI Methodology [Proving value] | “Does our investment in people development produce a return we can actually measure?” | Phillips ROI adds a financial lens. Before investing, define which behaviors you expect as the return and how you will know they have occurred. Link development investment to the desired behaviors, and those behaviors to business results. Then the ROI conversation stops being intimidating and becomes self-evident. Measure before and after to show ROI as a real number. This helps HR talk to the CFO in business language. |
| Brinkerhoff's Success Case Method [Selecting whom to study] | “Why do some people develop and others don't, even after the same program?” | Measuring everyone's average result tends to hide both successes and failures. Instead, Brinkerhoff proposes studying both extremes: the leaders who applied the learning the most and the least. The aim is to find out what made the difference. The findings are remarkably consistent. The gap between high- and low-transfer participants almost never comes from the training content. It comes from the environment leaders return to: the quality of feedback they receive, whether their manager reinforces the new behavior, and whether the organization signals that those behaviors really matter. In other words, development does not fail in the training room; it fails in the environment leaders return to. This insight helps design the conditions under which development actually takes hold. |
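The Phillips ROI Methodology is commonly expressed with two ratios: the benefit-cost ratio (BCR) and ROI as a percentage. Here is a worked example using purely hypothetical figures. The first is the monetized benefit attributed to a program; the second is the program's full cost.

$$
\begin{aligned}
\text{BCR} &= \frac{\text{Program benefits}}{\text{Program costs}} = \frac{1{,}500{,}000}{1{,}000{,}000} = 1.5 \\[4pt]
\text{ROI}\,(\%) &= \frac{\text{Program benefits} - \text{Program costs}}{\text{Program costs}} \times 100 = \frac{500{,}000}{1{,}000{,}000} \times 100 = 50\%
\end{aligned}
$$

The benefit term is only as credible as the before/after evidence behind it. That is why the expected behaviors need to be defined before the investment is made.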

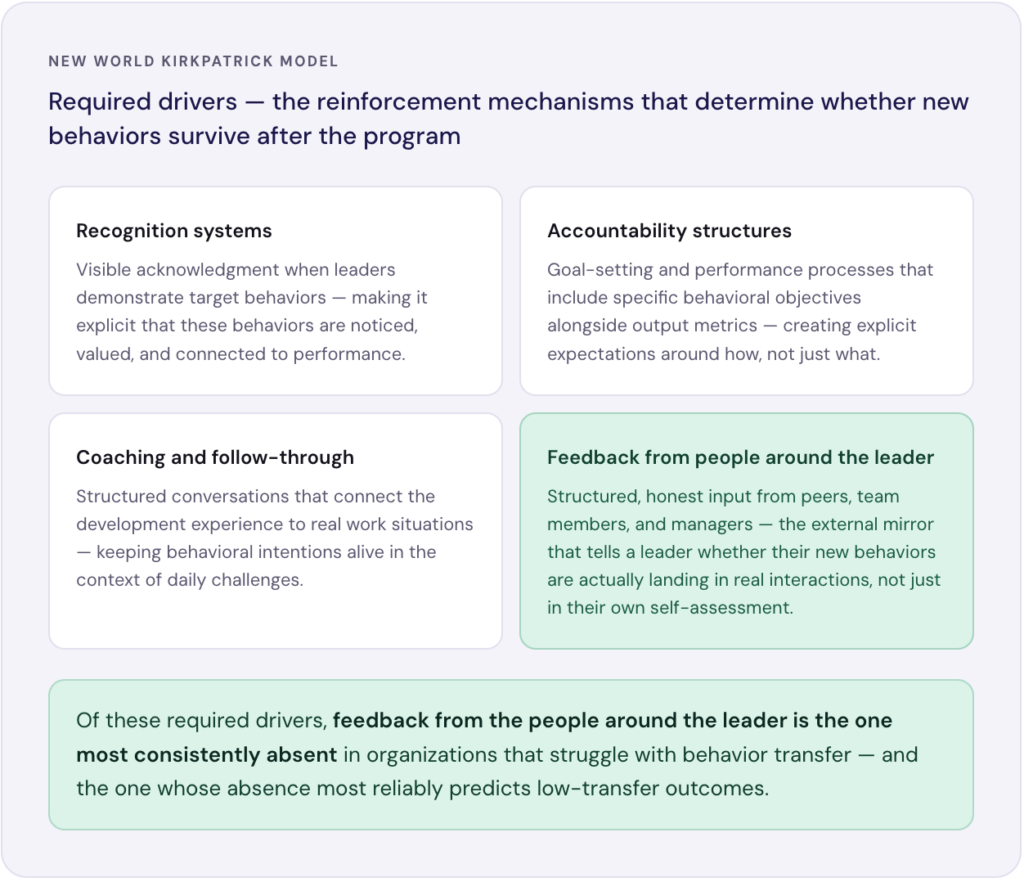

| Framework [focus] | Key question | What it tells us |
|---|---|---|
| New World Kirkpatrick Model [Planning] | “Are we designing programs from the results we want?” | It teaches designing backward from Level 4 (business results) to Level 3 (behaviors), then building required drivers to sustain the new behavior. The wrong way round is choosing a course first and hoping for results. The key principle: design backward from the desired results, not forward from the content you want to teach. Instead of asking “What should we teach?”, the model asks: “What should Level 3 behavior look like for this leader in this role? What required drivers are needed for that behavior to happen and last?” Required drivers are the mechanisms that keep new behavior alive after the program ends: recognition, accountability and coaching. Most important of all is feedback from the people around the leader, confirming that the new behavior is really happening. Kirkpatrick Level 3 is where almost every organization runs out of steam. Levels 1 and 2 are measured in the room, through satisfaction surveys, knowledge tests and engagement scores. Level 3 happens afterward, in real meetings and under real pressure, and you are not there to observe it. Yet it is the true measure of HR and L&D's skill. |
| LTEM (Thalheimer) [Measurement] | “Do we have external evidence that behavior has really changed on the job?” | Dr. Will Thalheimer's Learning Transfer Evaluation Model (LTEM) sharpens the point further. Across its eight tiers of evidence, LTEM makes clear that meaningful evidence of learning requires external confirmation. That means not self-assessment and not a manager's assumptions. It means structured observation by the people who directly experience the learner's behavior. The eight tiers are more precise than Kirkpatrick's levels. Tiers 7-8 call for external confirmation in real work situations, not just satisfaction or in-room tests. |

We have argued four points:

- Investment in people development should be anchored in the desired behaviors.
- Those behaviors must be defined at Level 3 before a program is designed.
- The environment around the leader, not the curriculum, determines whether transfer happens.
- Required drivers must be built around development so the new behavior survives.

In the end, every framework points to the same infrastructure, grounded in behaviors and skills aligned with the organization's strategy.

That infrastructure is a mechanism that gathers honest, structured feedback from peers, team members and managers, both before and after development. These are the people who experience the leader's behavior every day. Its purpose is not to judge or to rank, but to help leaders see themselves clearly from the outside.
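Below is a minimal Python sketch of the before/after comparison this kind of mechanism enables. It assumes ratings are stored as simple tuples. The record layout, the rater role names and the function `external_change` are all hypothetical. The one design choice taken from the text is that self-ratings are excluded, so the result reflects only the people who observe the leader.

```python
from statistics import mean

# Hypothetical record layout: (leader, rater_role, phase, behavior, score on a 1-5 scale)
EXTERNAL_ROLES = {"manager", "peer", "direct_report"}


def external_change(ratings, leader):
    """Average after-minus-before change per behavior, from external raters only."""
    buckets = {}  # behavior -> {"before": [scores], "after": [scores]}
    for who, role, phase, behavior, score in ratings:
        if who != leader or role not in EXTERNAL_ROLES:
            continue  # skip other leaders and self-ratings
        # phase is expected to be exactly "before" or "after"
        buckets.setdefault(behavior, {"before": [], "after": []})[phase].append(score)
    changes = {}
    for behavior, phases in buckets.items():
        # Only report behaviors that were observed in both rounds.
        if phases["before"] and phases["after"]:
            changes[behavior] = round(mean(phases["after"]) - mean(phases["before"]), 2)
    return changes


ratings = [
    ("leader_a", "self", "before", "gives feedback", 4),
    ("leader_a", "self", "after", "gives feedback", 5),
    ("leader_a", "manager", "before", "gives feedback", 2),
    ("leader_a", "peer", "before", "gives feedback", 3),
    ("leader_a", "direct_report", "before", "gives feedback", 2),
    ("leader_a", "manager", "after", "gives feedback", 4),
    ("leader_a", "peer", "after", "gives feedback", 3),
    ("leader_a", "direct_report", "after", "gives feedback", 4),
    ("leader_a", "peer", "before", "delegates", 3),  # no "after" rating yet
]

print(external_change(ratings, "leader_a"))  # {'gives feedback': 1.33}
```

Here the leader's self-rating rose by a point. The figure reported, however, comes only from the manager, peer and direct report. This is the kind of external confirmation that Level 3 and LTEM call for.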

Without this mechanism, Level 3 remains invisible and ROI remains an estimate. Required drivers have no feedback loop, and Brinkerhoff's high-transfer environment never fully forms. The 20% in 70-20-10 stays underfunded and unmeasured.

The organizations building sustainable leadership capability do not need to run more programs than anyone else. They build a better feedback environment. In that environment, leaders see themselves clearly and development creates win-win value for everyone involved.

At Beaconex, we have worked with these frameworks alongside our clients for many years. We are always happy to talk if you would like to discuss:

- how to approach investment in people development in your organization
- what behavior-anchored 360 feedback looks like in practice

💬 Of the 5 frameworks in this series, which one changed your thinking about L&D investment the most?